This paper reviews different notions of machine autonomy and presents a definition of autonomy and its attributes.

The attributes of autonomy presented in this paper are categorised into low-level and high-level attributes.

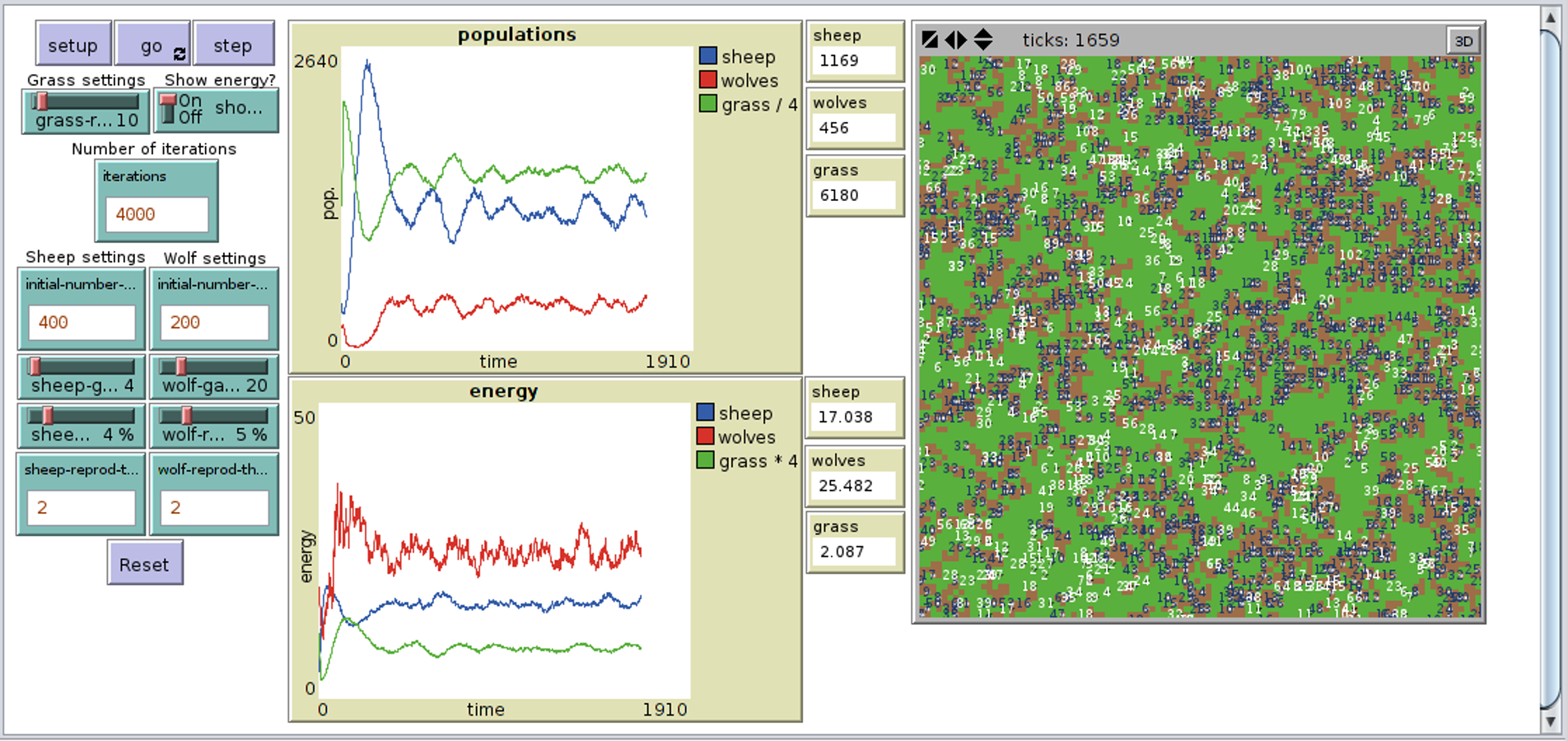

The design and implementation of AI systems such as self-driving cars and factory robots have been mostly hand-engineered, in the sense that designers aim to give the robots adequate knowledge of their world. This approach is not always efficient, especially when the agent’s environment is unknown or too complex to be represented algorithmically. A truly autonomous agent can develop the skills it needs to succeed in such environments without being given ontological knowledge of the environment a priori.

Infants are excellent at interacting with the environment. Especially in the initial phase of cognitive development, they exhibit remarkable abilities to generate novel behaviors in unfamiliar situations and to explore actively to learn as well as they can despite lacking extrinsic rewards from the environment. These abilities of sense-making and knowledge construction set them apart from even the most advanced autonomous robots. For most artificial agents (and robots), acquiring such abilities is overwhelming. In most traditional Artificial Intelligence (AI) approaches, learning is usually insufficient, subject to various biases, and lacking in flexibility.

Explaining the learning mechanism behind infants’ early cognitive development, and replicating some of these abilities in an autonomous agent, has become a focal point of recent efforts in robotics and AI research. In this dissertation, I propose a computational model, the Constructivist Cognitive Architecture (CCA), as a way towards simulating the early learning mechanism of infants’ cognitive development, based on theories of enactive cognition, intrinsic motivation, and constructivist epistemology. The CCA allows a self-motivated agent to autonomously construct its perception of the environment and to acquire the self-adaptation and flexibility needed to generate appropriate behaviors for the diverse situations it encounters while interacting with the environment. In contrast with traditional cognitive architectures, the introduced model neither initially endows the agent with prior knowledge of its environment nor supplies it with knowledge during its learning process.

Accordingly, I am not proposing an algorithm that optimizes exploration of a predefined problem space to reach predefined goal states. Instead, I propose a way for the agent to autonomously encode its interaction experiences and reuse behavioral patterns, based on the agent’s self-motivation, which is implemented as inborn proclivities that drive the agent proactively. In addition, I present two forms of self-motivation: successfully enacting sequences of interactions (autotelic motivation), and preferentially enacting interactions that have predefined positive values (interactional motivation). Following these drives, the agent autonomously learns regularities afforded by the environment and constructs a causal perception of phenomena whose hypothetical presence in the environment explains these regularities.

Furthermore, I propose a Bottom-up hiErarchical sequential Learning model based on the CCA, called BEL-CA, as a solution for an autonomous agent to learn hierarchical sequences of behaviors and to acquire capabilities of self-adaptation and flexibility. The agent represents its current situation in terms of perceived affordances that develop through the agent’s experience. This situational representation works as an emerging situation awareness that is grounded in the agent’s interaction with its environment and that in turn generates expectations and activates adapted behaviors. Through its activity and these aspects of behavior (behavioral proclivity, situation awareness, and hierarchical sequential learning), the agent starts to exhibit emergent sensibility, intrinsic motivation, and autonomous learning.

Moreover, I introduce GAIT (Generating and Analyzing Interaction Traces Toolkit), an implementation of a toolkit for analyzing the learning process at run time. I report an experiment in which the agent learned to interact successfully with its environment and to avoid unfavorable interactions using regularities discovered through interaction. I use GAIT to report and explain the detailed learning process and the structured behaviors that the agent has learned at each decision-making step.
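
The interactional motivation described above, in which the agent prefers interactions with predefined positive values and avoids unfavorable ones using regularities it discovers, can be illustrated with a toy sketch. This is not the CCA or BEL-CA implementation: the two-cell corridor, the valence numbers, and the names `VALENCE`, `ToyCorridor`, `ToyAgent`, and `run` are all hypothetical, and the agent's context is simplified to the single previously enacted interaction rather than a learned hierarchy of sequences.

```python
from collections import defaultdict

# Hypothetical valences (assumed for illustration): the predefined value of
# each primitive interaction, written as an (action, result) pair.
VALENCE = {
    ("move", "ok"): 5,
    ("move", "bump"): -10,
    ("turn", "ok"): -1,
}


class ToyCorridor:
    """Two-cell corridor; the agent never observes its position directly."""

    def __init__(self):
        self.position = 0

    def enact(self, action):
        if action == "turn":
            self.position = 0  # turning around faces the free cell again
            return "ok"
        if self.position == 0:
            self.position = 1
            return "ok"
        return "bump"  # moving while already against the wall


class ToyAgent:
    """Prefers the action whose anticipated interaction has the highest valence."""

    def __init__(self, valence):
        self.valence = valence
        self.actions = sorted({action for action, _ in valence})
        # counts[context][action][result]: how often `action` led to `result`
        # right after the interaction `context` was enacted.
        self.counts = defaultdict(lambda: defaultdict(lambda: defaultdict(int)))
        self.context = None  # the previously enacted interaction, if any

    def expected_valence(self, action):
        outcomes = self.counts[self.context][action]
        total = sum(outcomes.values())
        if total == 0:
            return 0.0  # untried in this context: neutral expectation
        return sum(self.valence[(action, r)] * n for r, n in outcomes.items()) / total

    def choose(self):
        # Ties are broken by alphabetical order of the action names.
        return max(self.actions, key=self.expected_valence)

    def learn(self, action, result):
        # Record the regularity "after context, action produced result".
        self.counts[self.context][action][result] += 1
        self.context = (action, result)


def run(steps):
    """Let the agent interact for `steps` cycles and return the interaction trace."""
    env, agent = ToyCorridor(), ToyAgent(VALENCE)
    trace = []
    for _ in range(steps):
        action = agent.choose()
        result = env.enact(action)
        agent.learn(action, result)
        trace.append((action, result))
    return trace
```

In this toy world, `run(20)` produces two bumps during early exploration, after which the agent alternates `move` and `turn` and no longer bumps. No goal state or model of the corridor is given to the agent; its behavior follows only from the valences and the regularities it has recorded.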